Intelligent design of Bragg gratings using artificial intelligence

Fig. 1: Schematic of the parameter space, the Bragg waveguide structure, and the data generation process (from left to right). Intelligent data acquisition methods allow ML models to be trained on far fewer, yet more informative, data points.

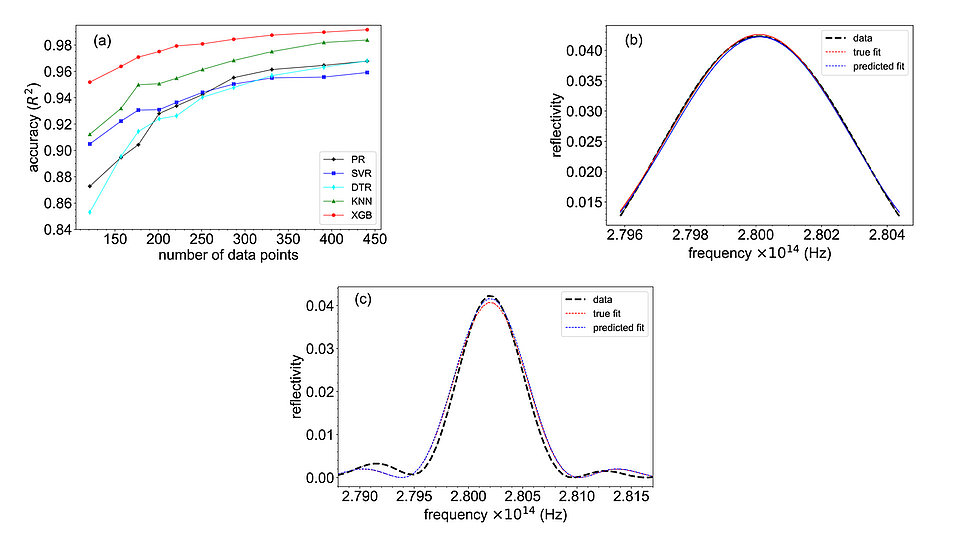

Fig. 2: (a) Prediction accuracy, R², as a function of database size, with the XGBoost model achieving a high R² value of 0.997 (maximum 1). The ML models in the legend are polynomial regression (PR), support vector regression (SVR), decision tree regression (DTR), k-nearest neighbors (KNN), and extreme gradient boosting (XGB). (b) The predicted reflectance spectrum for a random point in the database, with only the top two-thirds predicted using a Gaussian fit (taken from [1]). (c) The predicted reflectance spectrum (main lobe and the two side lobes) for a random point in the database, with the fit done using coupled mode theory.

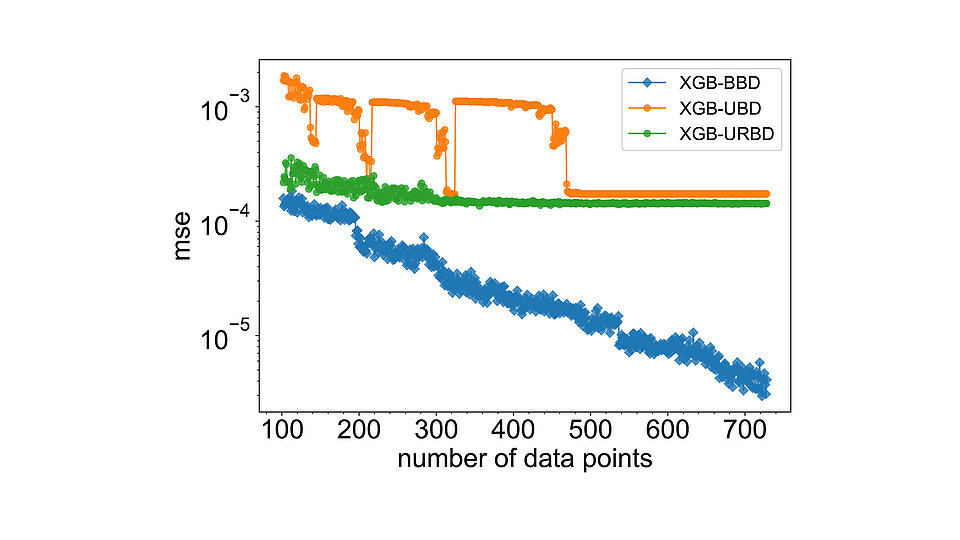

Fig. 3: Performance comparison of XGBoost ML model (XGB) trained on three different databases: BBD (Bayesian-based), UBD (uniform-based), and URBD (uniform-random-based). The mean squared error (MSE) for the XGBoost model trained on BBD continuously decreases. The detailed procedure is described in [2].

To design lasers tailored to various applications, including quantum sensing, we use intelligent methods to obtain the desired optical response (OR) of Bragg gratings within semiconductor ridge waveguides (see Fig. 1). We predict the characteristics of Bragg gratings by means of AI algorithms trained on their OR. By refining simulation accuracy, optimizing AI algorithms to predict the OR, and constructing databases effectively, our research contributes directly to the development of advanced custom-made lasers. The robustness of our results rests largely on a careful investigation of three critical aspects: accurate simulation for database generation, AI algorithms capable of accurate predictions when trained on limited data, and construction of a minimal yet highly informative database.

Accurate simulation for database generation

The accuracy of the simulations used to create the database is crucial. Recent studies in inverse design have commonly used deep learning models, taking advantage of cost-effective but lower-resolution data generation methods. Because AI algorithms are susceptible to inaccuracies in their training data, we began the project by constructing a database from 3D FDTD simulations [1]. Although resource-intensive, these simulations provide the accuracy needed for a reliable database. At the same time, their cost limits the number of data points that can be generated, which makes it necessary to find AI algorithms that train efficiently on small datasets.

AI algorithms capable of accurate predictions while trained on limited data

Training AI algorithms on limited datasets requires models that can capture the fine structure of a complex, multi-dimensional parameter space. We therefore investigated machine learning (ML) models known for their performance on small datasets, including optimized extreme gradient boosting (XGBoost), which has proven successful in domains with sparse data, such as medical studies. Our investigations revealed that XGBoost consistently outperforms the other models on a database reduced to half its size [1]. The prediction accuracy, R², is plotted as a function of database size in Fig. 2(a); XGBoost achieves the highest accuracy of all methods compared, with an R² value of 0.997 out of 1. Fig. 2(b-c) shows reflectance spectra predicted by XGBoost for a random point in the database, illustrating the precision of the prediction. An R² of 0.997 means that the model explains 99.7 % of the variance in the reflectance spectra, so its predictions are very close to the actual data [1]. To further improve ML performance with a small database, the limited data points must carry maximum information and diversity, so that the AI model is trained on all possible features.
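As a rough illustration of how R² can be tracked against database size, the sketch below fits a polynomial regression (one of the baseline models in Fig. 2(a)) to a synthetic one-dimensional target using NumPy only. The target function `toy_response`, the sample sizes, and the polynomial degree are assumptions chosen for illustration; they are not the data or settings of [1], and XGBoost itself is not used here.

```python
import numpy as np


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)          # unexplained variation
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)   # total variation
    return 1.0 - ss_res / ss_tot


def toy_response(x):
    """Hypothetical smooth stand-in for a simulated optical response."""
    return np.exp(-((x - 0.5) ** 2) / 0.02)


def learning_curve(sizes, degree=8, seed=0):
    """Return {training-set size: R² on a fixed test grid}."""
    rng = np.random.default_rng(seed)
    x_test = np.linspace(0.0, 1.0, 200)
    y_test = toy_response(x_test)
    scores = {}
    for n in sizes:
        # Draw n random training points and fit a polynomial to them.
        x_train = rng.uniform(0.0, 1.0, size=n)
        coeffs = np.polyfit(x_train, toy_response(x_train), deg=degree)
        scores[n] = r2_score(y_test, np.polyval(coeffs, x_test))
    return scores


if __name__ == "__main__":
    for n, score in learning_curve([20, 40, 80, 160]).items():
        print(f"n={n:4d}  R2={score:.4f}")
```

An R² of 1 corresponds to a perfect prediction, while a model that always predicts the mean of the test data scores 0; the same `r2_score` function could be applied to any of the other regressors to compare them.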

Minimal yet highly informative database construction

Acquiring a large number of data points for ML model training is challenging, particularly with 3D FDTD simulations, as mentioned above. We proposed an approach that constructs a minimal yet highly informative database by adding data points with maximum variation to the existing data. Starting from a few data points randomly distributed in the parameter space, we model the underlying relation between output and input parameters via Gaussian process regression (GPR). Using only the limited known data, GPR provides a predictive mean and standard deviation for unknown data points across the entire parameter space. Employing Bayesian optimization, we efficiently selected new data points with maximum standard deviation, resulting in an informative database for ML model training. A comparison with traditional approaches (databases generated using uniform and uniform-random distributions) highlights the superiority of the Bayesian method, which achieves high accuracy with one order of magnitude fewer data points (see Fig. 3) [2].
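The selection loop described above can be sketched in a few lines. This is a minimal one-dimensional illustration under stated assumptions, not the procedure of [2]: the function `simulate` is a hypothetical cheap stand-in for an FDTD run, the kernel and its length scale are arbitrary choices, and the point of maximum standard deviation is found by exhaustive search on a grid rather than by a dedicated optimizer.

```python
import numpy as np


def rbf_kernel(a, b, length_scale=0.15):
    """Squared-exponential kernel between 1-D point sets a and b."""
    diff = a[:, None] - b[None, :]
    return np.exp(-0.5 * (diff / length_scale) ** 2)


def gp_posterior(x_train, y_train, x_query, noise=1e-6):
    """Posterior mean and standard deviation of a zero-mean GP."""
    k_tt = rbf_kernel(x_train, x_train) + noise * np.eye(len(x_train))
    k_qt = rbf_kernel(x_query, x_train)
    chol = np.linalg.cholesky(k_tt)
    alpha = np.linalg.solve(chol.T, np.linalg.solve(chol, y_train))
    mean = k_qt @ alpha
    v = np.linalg.solve(chol, k_qt.T)
    var = 1.0 - np.sum(v ** 2, axis=0)  # prior variance is 1 for this kernel
    return mean, np.sqrt(np.clip(var, 0.0, None))


def simulate(x):
    """Hypothetical cheap stand-in for an expensive 3D FDTD simulation."""
    return np.sin(6.0 * x) * np.exp(-x)


def build_database(n_initial=3, n_added=10, seed=0):
    """Grow a database by repeatedly sampling where the GP is least certain."""
    rng = np.random.default_rng(seed)
    candidates = np.linspace(0.0, 1.0, 201)   # assumed 1-D parameter space
    x = rng.uniform(0.0, 1.0, size=n_initial)  # few random starting points
    y = simulate(x)
    for _ in range(n_added):
        _, std = gp_posterior(x, y, candidates)
        x_new = candidates[np.argmax(std)]     # maximum predictive std
        x = np.append(x, x_new)
        y = np.append(y, simulate(x_new))
    return x, y


if __name__ == "__main__":
    x_db, y_db = build_database()
    print(np.sort(x_db))
```

Each added point lands where the current model is least certain, so the samples spread over the parameter space instead of clustering; in a multi-dimensional design space the grid search would need to be replaced by an optimizer over the acquisition function.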

Looking ahead, we are extending our methodology beyond simulation data to incorporate experimental data points within the complex design space. By producing informative test structures using the approach described above, we aim to enrich our databases with real-world measurements. This not only makes our AI models more representative of fabricated devices, but also enables rigorous benchmarking against real devices.

Publications

[1] M. R. Mahani, Y. Rahimof, S. Wenzel, I. Nechepurenko, and A. Wicht, “Data-efficient machine learning algorithms for the design of surface Bragg gratings”, ACS Appl. Opt. Mater. 1, 1474–1484 (2023).

[2] M. R. Mahani, I. Nechepurenko, Y. Rahimof, and A. Wicht, “Optimal Data Generation in Multi-Dimensional Parameter Spaces, using Bayesian Optimization”, accepted for publication in Machine Learning: Science and Technology, 2024.